I came across this article on Drumpf 2.0 a short while ago which interprets the actions of this administration through Arendt’s understanding of stupidity: the idea that all that matters is how things appear, not what they actually do; that effect is not linked to cause, and everything is effect. I wonder if there’s a similar fundamental power in generative AI hype. The evidence continually suggests that machines cannot do what they are claimed to be able to do, that they only have the appearance of being able to wipe out work, create trillions of dollars of revenue and usher in a new dark/golden age (for those who are on whichever the ‘right’ side of history is). Any degree of scrutiny shows it to be what it is: all play-acting and appearance. But maybe that’s literally all there is now?

Five Things

1. AI is inevitable because we already have it.

Two parallel ideas jumped out at me, both of which are framing devices used, first, by governments and policy makers in AI plans and strategies and, secondly, by AI researchers themselves.

the myth of an inevitable pathway toward AI is created through a play with history that glorifies a seemingly present technological rupture or points at a continuation of a grand legacy, while at the same time negating the role of human agency in such technological development. (Bareis & Katzenbach, 2022, p. 867)

The grand legacy here is the way that AI is often framed in a transhistorical continuity with previous technological ‘breakthroughs’ like the Internet, smartphones, social media etc. This has the effect that, because those things are real and substantially exist, AI will too. They are in the same typology of thing: things that actually really exist and have impacted the world. This may seem obvious but, for example, the inverse to ‘It’s like the Internet, social media and smartphones’ is ‘It’s like Betamax, the personal hovercraft and nuclear flight.’ At the time, these technologies were also framed as inevitable and as part of the lineage of pre-existing technology, but they obviously failed. In other words, a good way of making AI seem inevitably real is to frame it in the context of other real things rather than other speculative things.

The other one comes from Agre who I mentioned re-reading last week:

AI people generally consider that their goals of mechanized intelligence are achievable for the simple reason that human beings are physically realized entities, no matter how complex or variable or sociable they might be, and AI’s fundamental commitment (again, in practice, if not always in avowed theory) is simply to the study of physically realized entities, not to production systems or symbolic programming or stored-program computers. (Agre, 1997, p. 5)

Agre’s point, from his years of being a computer scientist, is that AI people think AI is possible because humans exist. If humans can exist as ‘physically realized entities’ then they are made of things (plans and situated actions as Agre, borrowing from Suchman, has it) and all you have to do is figure out what those things are and make them artificially. Or as Borup et al. put it:

If we accept that anticipation is actually constitutive of value, then we logically cannot differentiate between our expectations of things (biotechnologies, stem cells, nanotechnologies, etc.) and what those things in fact are. Those ‘underlying fundamentals’ are themselves future abstractions, expectant projections that alter the now, the future working back on the present. (Borup et al., 2006, p.287)

So what? The existence of one thing being used as self-evident proof of the inevitable existence of something else is a big driver of the myth of autonomous technology – that the technology ‘substantially pre-exists’ (Borup et al. 2006) out there in some latent space and needs to be brought into being with the right insights and science.

2. Got all that! Which is good and bad

I re-read Borup et al.’s Sociology of Expectations. It’s not as incendiary as Agre but is a foundational thing. I’ve been struggling to find info on how users are invented by technology developers and, excitingly, I saw a reference to example studies and it turns out I’ve read them all. I sort of like that feeling of knowing that I’ve now got the concept triangulated but, as above, I hoped that there would be more out there so I don’t have to do all the heavy lifting. It seems very few people have been interested in writing about who AI developers think their users are and what they want.

So what? Please prove me wrong.

3. Judge for yourself

Sorry not sorry, it’s all PhD stuff. Constructing Audiences in Scientific Controversy (2011) from Jason Delborne helpfully brings together ideas I first came across in Jasanoff about the role of public and professional audiences in science. Often I find when talking about the way AI is constructed, it’s hard to demonstrate by comparison; how could the speculations, claims, bloviation and failures be framed otherwise to show people that the social construction of AI is entirely artificial?

Delborne explores the ideas through the controversy over the contamination of Mexican maize with genetically modified DNA in 2001. He talks through the initial claims and counterclaims and, importantly, beyond scientific discourse, the use of blogs and forums by marketeers and scientists. Near the end, Nature, rather than retract the various publications, decides to present them all, with the letters to the editor that had been coming in, and just goes ‘readers, make up your own minds.’

Nature abandoned confidence in its peer-review process and invited its reading audience to become the arbiter of truth—knowing that readers would undoubtedly come to various conclusions. (Delborne, 2011, p.84)

Delborne describes this as an important turning point where the boundary between professionals and publics is shifted – the professionals too compromised by the new media landscape and the public too invested to simply observe.

So what? Can you imagine any ‘professional authority’ on AI, be it a commercial organisation, a research institution or a governing body, just saying ‘we don’t know, here’s all the evidence, judge for yourself’? It’s in setting the certain inevitability presented in AI against the uncertainty and hesitancy around GM crops that an obvious contrast emerges.

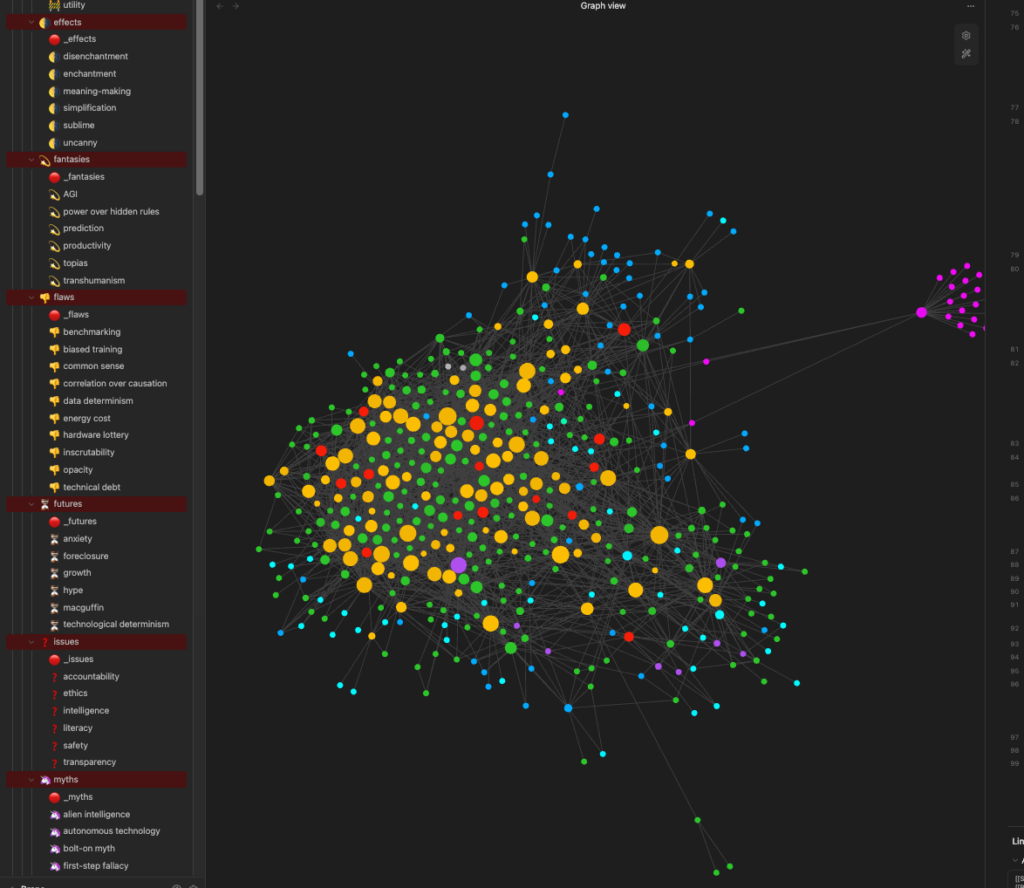

4. Rebuilding

I’m rebuilding my Obsidian vault, or at least reordering a bunch of stuff. If you’ll remember, it took about a week to set up last time and it’s served me well all that time. But I’ve been having the nagging feeling that the hierarchy and some of the ways I’m thinking about a ‘concept’ (which is really what all those yellow notes are) are wrong. Some, like ‘reductionism’, ‘simplification’, ‘abstraction’, ‘universalism’ are too muddy, too mixed up in my head and I need to pull them apart line by line so I can understand better. It’s annoying, but precisely the point of the method is to carve things out as their own concept once they are significant enough. Too many mornings filing papers, not enough sorting.

Fundamentally it’s about increasing the amount of nesting; as I’ve been growing I’ve been making the stacks of stuff too big and that means that the concepts are too murky and ill-defined. I also think I’ve been protective of existing notes. For example, I didn’t want to create one on ‘government’ and so kept putting stuff about government in different concepts. Then when it comes to ‘I wonder what role government plays here?’, government is nowhere to be found.

So this week has been a process of up-and-down nesting and pulling out concept notes from other concept notes. I have a rule-of-thumb that over 50 lines is too big and needs to be split out. On the other hand I have ‘no note is too small.’ Even if it’s a single line, if it feels like its own concept, make it so.

My sort of work brain is saying this will be a waste of time, but I know this is how I research; the work of having to filter, sort, reorder, rearticulate and render all these ideas is what leads me into thinking, not just idle reading and sorting. So big tidying mission, here we go.

5. You could always AI your Obsidian

There have been a couple of things out now about people integrating Claude into Obsidian. Claude is my go-to if I need one of the dreadful machines but I struggle to see what I would do with it in Obsidian (maybe other than better search). The whole point of arranging, curating and forming my vault is precisely so that I learn and develop. Taking shortcuts in that feels like cognitive offloading which would undermine the whole point of research and/or journaling.

Short Stuff

- Project Matador is a proposal for a massive power plant and data centre of 9GW or so. This is like a fun new typology of building.

- First time I’ve heard of ‘assisted evolution’, which is trying to save coral reefs.

- Chinese Ruler Man

- The world’s tallest vertical farm in Singapore, 23m tall, 2000 tonnes of greens a year.

- Confirmed, combustion car sales peaked ten years ago, in 2016.

- The rich got $2tn richer last year

- Microsoft’s ‘Community First’ data centre approach.

- Complex feelings about this. Definitely something good about bringing new disciplines to AI as a way of challenging epistemic norms, but I’m quite absolutist about not treating AI like any sort of living thing. Anyway, biologists studying LLMs.

- The creators of Warhammer have banned staff from using AI generated content and are generally nonplussed; it seems to be an integrity and IP thing.

- The need for green energy for Taiwan’s semiconductor centres is destroying fishing.

- South America looks to a big materials and copper boom.

- Vibe coding is making apps disposable, which maybe means a change in the way the software side of startups works.

- They sequenced the genetic code of the woolly rhino from the gut of a wolf pup.

- US-Taiwan deal to reshore $250bn of chip production in the US might mean more certainty for Taiwan.

- Former NY mayor Eric Adams implicated in a rug pull. Just goes to show, once again, crypto truly is super secure compared to fiat. I find it completely baffling that anyone at this point is still advocating crypto beyond charlatans, shysters and criminals. “But it could X, Y, Z!” Again, everything under capitalism defaults to capitalism.

- Converting all the land currently used for biofuels to solar panels would generate 23 times more energy because, again, after the bicycle, solar panels are the greatest technology ever invented.

- Wikipedia is really struggling. I’d encourage you to donate. It might not help, but it also might.

- Copilot made up an event that never happened which had substantial influence on policing decisions.

The corollary thought to my opening is where this all takes everything, of course. For a fleeting second a scenario resolved in my head: What if the AI bubble doesn’t burst, and it doesn’t liberate us all from work? What if this truly is the latest stage of capitalism and the big AI states, galvanised by protectionism and identity politics, dig their heels in and their AI becomes a national shibboleth around which you’re either a ‘them’ or ‘us’? What if it’s early 19th century Yorkshire with facial-recognition augmented night raids and seizures?

Ok, love you, speak later.